Rating Systems: What does it mean?

The lack of standardized rating systems sucks. There are so many ways to rate something that you begin to lose track of what any rating means. Even systems designed to simplify things muddy the waters, because they often lack the granularity needed to derive meaning. I’ll offer a guide…

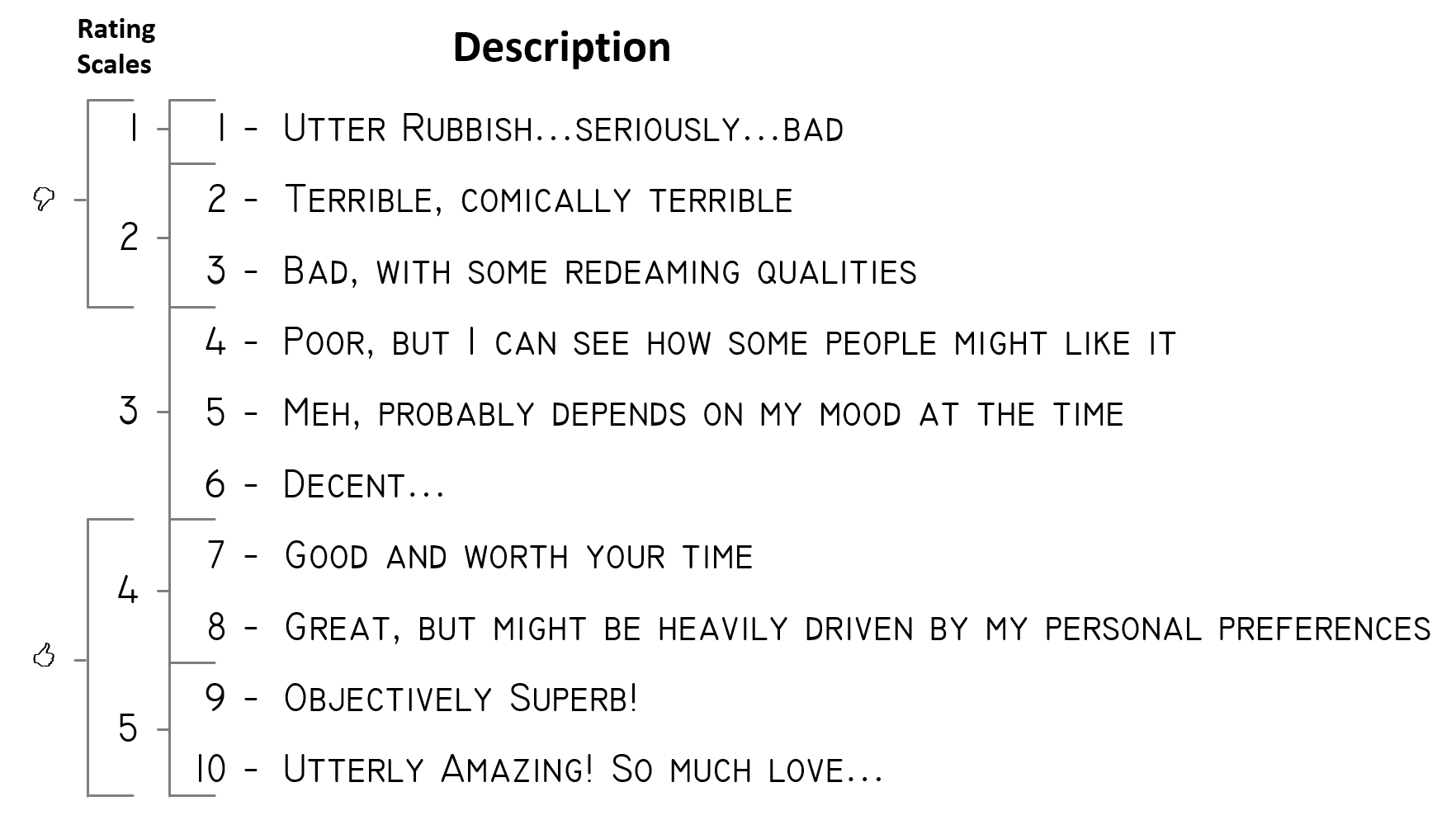

TL;DR - After distilling all the other rating systems, this is where I sit when I rate things.

Current Systems

There are a ton of rating systems out there but, in most cases, they follow a simple numerical ranking that fits the need: ten-point scales, 5-star ratings, thumbs up/down, or something similar. Looking at my homepage, you’ll find that I like tracking and rating the things in my life. It’s a little bit of data collection that I may use later.

Points Model

Goodreads, Amazon, Uber, Lyft, etc. all have 5-star rating systems. Trakt, IMDB, and Pain use a 10-point scale. This is the type that can have the most granularity.

Binary Model

Some sites, e.g. Netflix, YouTube, and Rotten Tomatoes, use a variation on quantity good vs. quantity bad. Count the good, count the bad, and derive a level of “goodness” as the rating. It lacks a middle ground for when you don’t want to give something a negative rating, but not a good one either.
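Here’s a minimal sketch of that “count the good, count the bad” idea. The function name `goodness` and the choice to report the share of positive votes are my own assumptions for illustration, not how any of these sites actually compute their scores.

```python
from typing import Optional


def goodness(thumbs_up: int, thumbs_down: int) -> Optional[float]:
    """Share of positive votes among all votes cast.

    Hypothetical helper: returns None when nobody has voted,
    since there is nothing to derive a rating from.
    """
    total = thumbs_up + thumbs_down
    if total == 0:
        return None
    return thumbs_up / total


print(goodness(80, 20))  # 0.8
```

Notice there is nowhere to record a “meh”: every vote has to land on one side or the other.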

Like Model

Others, e.g. Facebook and Medium, ignore the bad: you can “Like” or “Clap” or do nothing. So popularity is derived only from happiness with the post, ignoring both dislike and indifference…almost penalizing the post when indifference occurs.

Issues with the Systems

Like Model Reinforces Bubbles

Let’s start with the Like-model. The only options are a positive review or nothing at all, which means the negative side is ignored entirely. This type of rating system tends to reinforce filter bubbles, as it becomes increasingly difficult to decipher whether something was bad (or contrary) or simply ignored. It seems like the most user-friendly style because you either act (“Like/Clap”) or don’t, but it simply echoes your preferences back to you and does not allow for understanding of things that are truly contrary. There is something very enticing about this form though. As Medium has found out, there’s a large amount of gratification for the author in a simple pat on the back. With the negative ignored, even a small amount of positive reinforcement goes a long way toward keeping an author providing content.

Binary Model Ignores the Ignored

Expanding on that limited approach is the Binary-model. This is most commonly realized in the Thumbs-Up and Thumbs-Down approach. While this fixes the issue of identifying bad material, it also has a difficult functional problem. The Binary-model isn’t actually binary at all…what do you do with all the ignored ratings? Rotten Tomatoes doesn’t have this issue because it is truly binary, as there is no “I reviewed it, but didn’t supply an opinion…”. YouTube has relegated Thumbs Up/Down to second-class citizens behind view counts, ignoring any value proposition and simply showing popularity.
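To make the “ignored ratings” problem concrete, here’s a rough sketch. The numbers are invented, the name `approval_rates` is hypothetical, and I’m assuming each viewer votes at most once so that views minus votes equals the people who ignored the buttons.

```python
def approval_rates(thumbs_up: int, thumbs_down: int, views: int):
    """Return (approval among voters, approval among viewers, ignored count).

    Assumes views >= thumbs_up + thumbs_down (one vote per viewer at most).
    """
    votes = thumbs_up + thumbs_down
    ignored = views - votes
    among_voters = thumbs_up / votes if votes else None
    among_viewers = thumbs_up / views if views else None
    return among_voters, among_viewers, ignored


# Made-up example: 1000 views, but only 100 people bothered to vote.
print(approval_rates(90, 10, 1000))  # (0.9, 0.09, 900)
```

Same video, same votes: it is either 90% liked or 9% liked, depending on whether the 900 silent viewers count. That third group is why the model isn’t really binary.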

Netflix is interesting because I don’t know what they do with their model. I assume it’s not binary, as they are definitely harvesting views along with the ratings, so they know how many people ignore the rating. But my personal experience (and one echoed by people I’ve discussed this with) is that since they moved away from the 5-star model, I’ve stopped rating shows altogether.

Points Model Needs Context

The Points-model is what the 5-star, X-to-10, and similar systems fall into. When rating content, this is my favorite model. It allows the granularity to understand the level of goodness/badness of an item.

Five-star systems have good granularity and allow for the bad, but that is often not enough to fully understand the issue. I like when systems overcome this by allowing half stars, which essentially puts them on a 10-point scale. And that’s the best one.
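The half-star trick is just doubling. A quick sketch, assuming the lowest possible rating is half a star (some sites may start at one full star or allow zero); `half_stars_to_ten` is a name I made up for this example.

```python
def half_stars_to_ten(stars: float) -> int:
    """Convert a half-star rating (0.5 to 5.0) to a 10-point score."""
    doubled = stars * 2
    # Reject anything that is not a half-star step in range.
    if doubled != int(doubled) or not 1 <= doubled <= 10:
        raise ValueError(f"not a valid half-star rating: {stars}")
    return int(doubled)


print(half_stars_to_ten(3.5))  # 7
print(half_stars_to_ten(5))    # 10
```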

I’m a big fan of the 10-point scale. It allows me to put meaning into a rating, so if anyone wants my opinion on something, it is well understood. Trakt and IMDB both use this and it works for them. I’ve found that the average ratings on these services tend to be excellent at telling me whether I’ll like something. There is a range of uncertainty, but normally I can go in understanding that it could teeter the other way.

Applying Meaning to The Points Model

The problem with all these systems is: “What does it mean!?!?” Let’s take the 10-point system. 10 is awesome, 1 is awful…that’s known. But where’s the middle? Some may say 5, but that’s not always the case. Think about deciding whether to play a certain video game. If the game gets a 1 you are definitely going to avoid it, but does a 2 mean you’ll give it a shot? What about 3 and 4 for that matter? Normally, only if it gets a 5 will you think…well, maybe. So now you have a 7-8 point scale…and that will explode brains.

So an average game is probably sitting in the 6-7 point range instead of the 5. But that’s where context is missing. What does 5 mean? Does it mean Average? How do you know this? How can we help?

Trakt’s Rating Definitions

My favorite rating system is from Trakt. They apply context to each number. Here’s Trakt’s current explanation:

1. Weak Sauce :(
2. Terrible
3. Bad
4. Poor
5. Meh
6. Fair
7. Good
8. Great
9. Superb
10. Totally Ninja!

Now there’s context. As you can see, they place average at 6-to-7 with the Fair/Good distinction…but also allow for some new interpretations.
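If you track ratings as data, this kind of context is easy to carry along with the number. A small sketch, assuming the labels in the list above run from 1 to 10 in the order shown; `TRAKT_LABELS` and `describe` are names I made up, not anything from Trakt’s API.

```python
# Labels copied from the list above, numbered 1-10 in order (assumed).
TRAKT_LABELS = {
    1: "Weak Sauce :(",
    2: "Terrible",
    3: "Bad",
    4: "Poor",
    5: "Meh",
    6: "Fair",
    7: "Good",
    8: "Great",
    9: "Superb",
    10: "Totally Ninja!",
}


def describe(score: int) -> str:
    """Attach the matching label to a 1-10 score."""
    if score not in TRAKT_LABELS:
        raise ValueError(f"score must be 1-10, got {score}")
    return f"{score}/10: {TRAKT_LABELS[score]}"


print(describe(6))  # 6/10: Fair
```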

I like the list. I take off “Weak Sauce :(” and “Totally Ninja!” and replace them with something like “Turned it off” and “Would pay to watch each time over and over again”, respectively. I think those explanations are a bit more descriptive. But there’s something interesting here…a show/movie that gets a rating of 2 or 3 may still be compelling… In this context there could be an “I know it’s bad, but it was fun to watch in that bad movie/show context” approach taken to the show.

Reducing to 5 Star Confuses Context

Compressing this down into a 5-star system is hard…you lose that 2-4 granularity. I find it hard to give a 2-star rating to something. If it’s bad, it’s bad (1); if it’s average it gets the three (3); four (4) is good; and five (5) is fantastic. Additionally, because I consume things with a known quality rating based on others’ reviews, I find myself hitting that 4 over and over. Suddenly my rating is difficult to understand. Was it above average, or fantastic except for a couple of issues?

A bit more granularity allows for that to be derived. And attaching context to each rating, like Trakt does, puts everyone on even terms.
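To see what gets lost, here’s a sketch that squeezes a 10-point score into 5 stars. Halving and rounding up is only one plausible mapping, and it’s my assumption for illustration; it isn’t how I or any particular site actually converts ratings.

```python
import math
from collections import defaultdict


def ten_to_five(score: int) -> int:
    """Compress a 1-10 score to 1-5 stars by halving and rounding up."""
    return math.ceil(score / 2)


# Group the 10-point scores by the star value they collapse into.
groups = defaultdict(list)
for score in range(1, 11):
    groups[ten_to_five(score)].append(score)

for stars, scores in sorted(groups.items()):
    print(stars, scores)
# 1 [1, 2]
# 2 [3, 4]
# 3 [5, 6]
# 4 [7, 8]
# 5 [9, 10]
```

Under this mapping a 7 and an 8 both come out as 4 stars, which is exactly the “was it above average or nearly fantastic?” ambiguity.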

My Default Rating Context

For posterity’s sake, here’s my rating system for when you see my reviews of anything. For the most part you can reference this and know where I stand on anything. Additionally, the “Thumbs Up” also indicates how I handle the “Like/Clap” feature that some sites have.

How to Understand My Ratings

That’s it, what do you think?

Title Photo by Mark Tegethoff